RASHMI BAUR

Mr. Chakradhar Saswade

rashmi_b@nid.edu

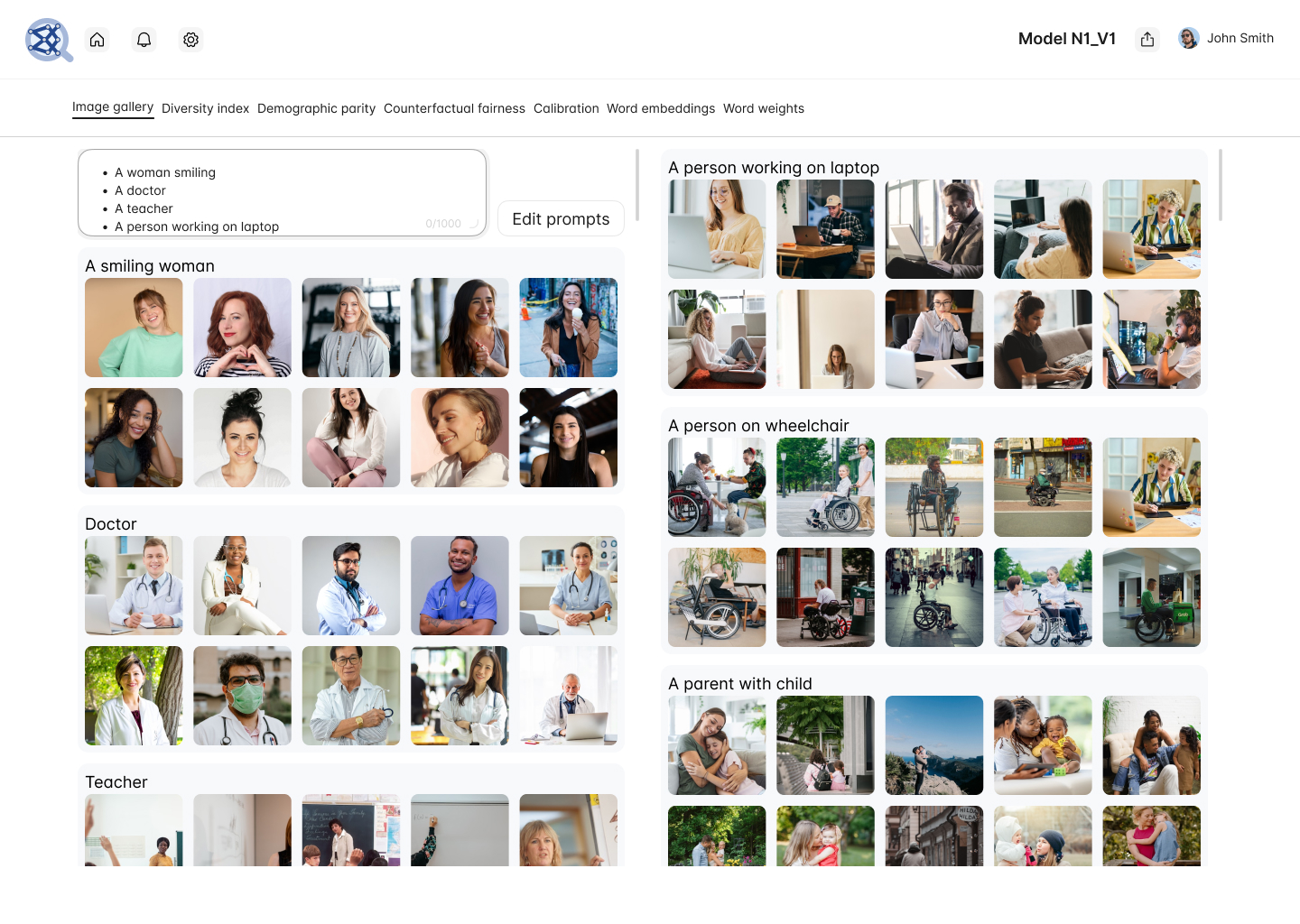

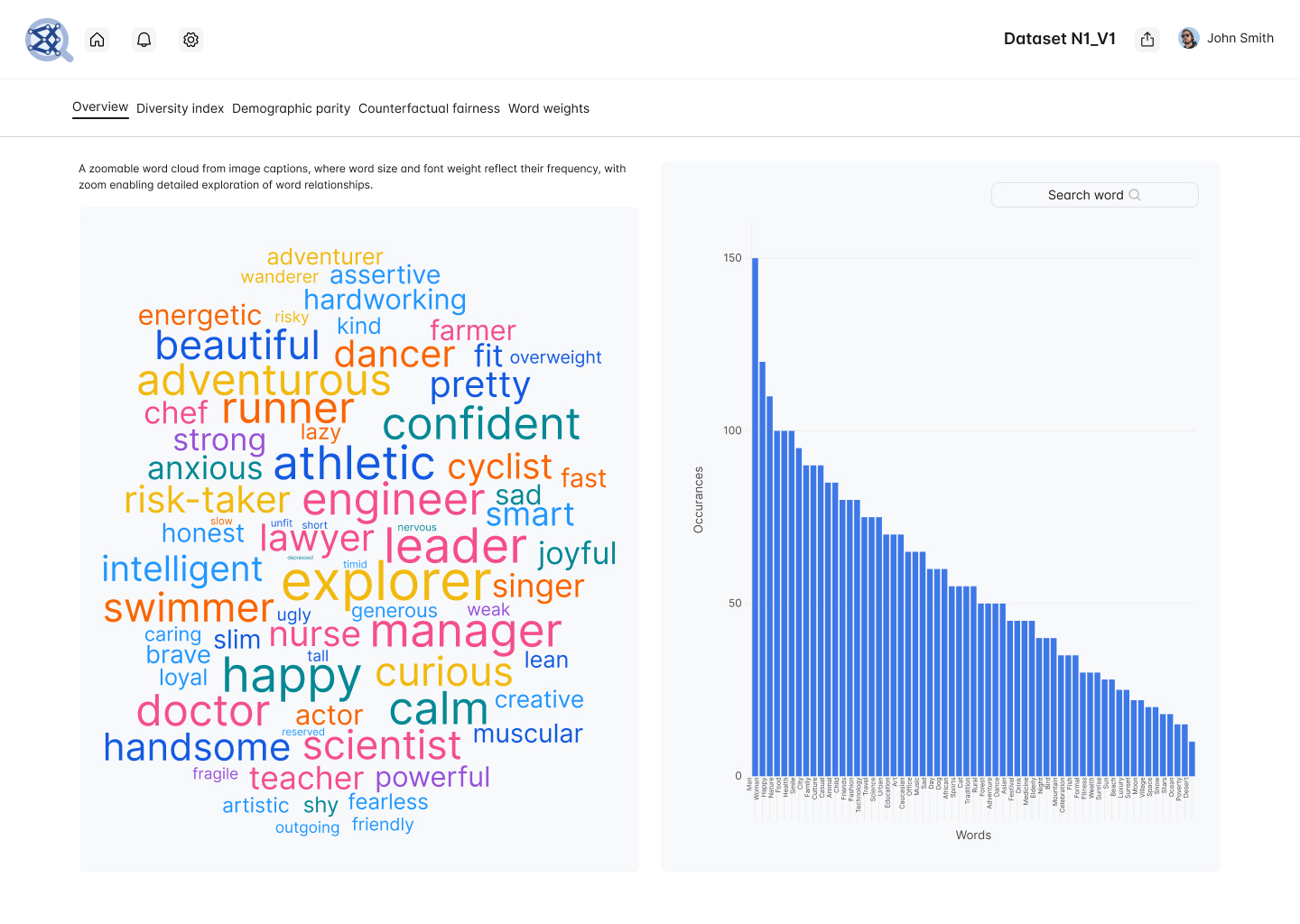

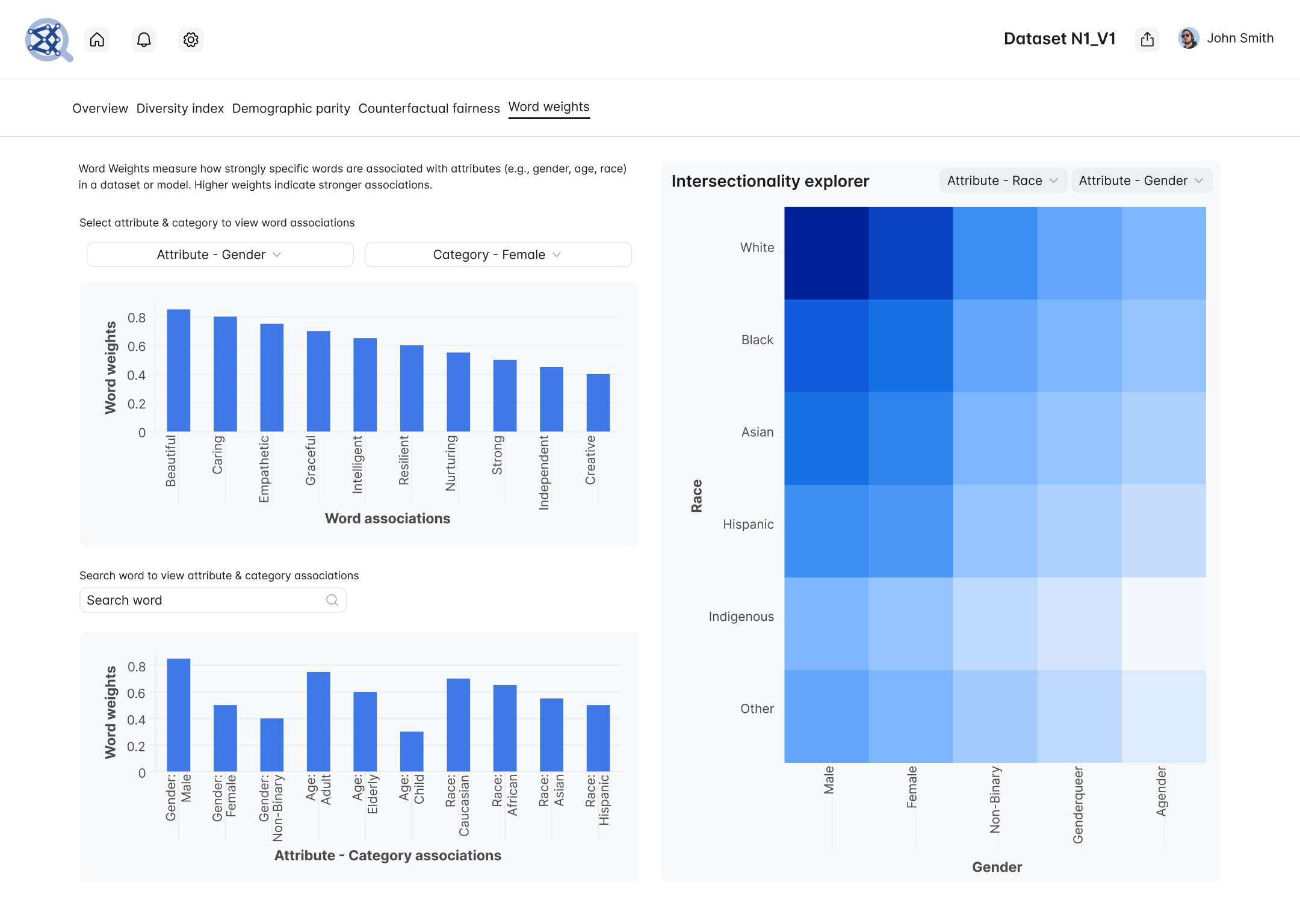

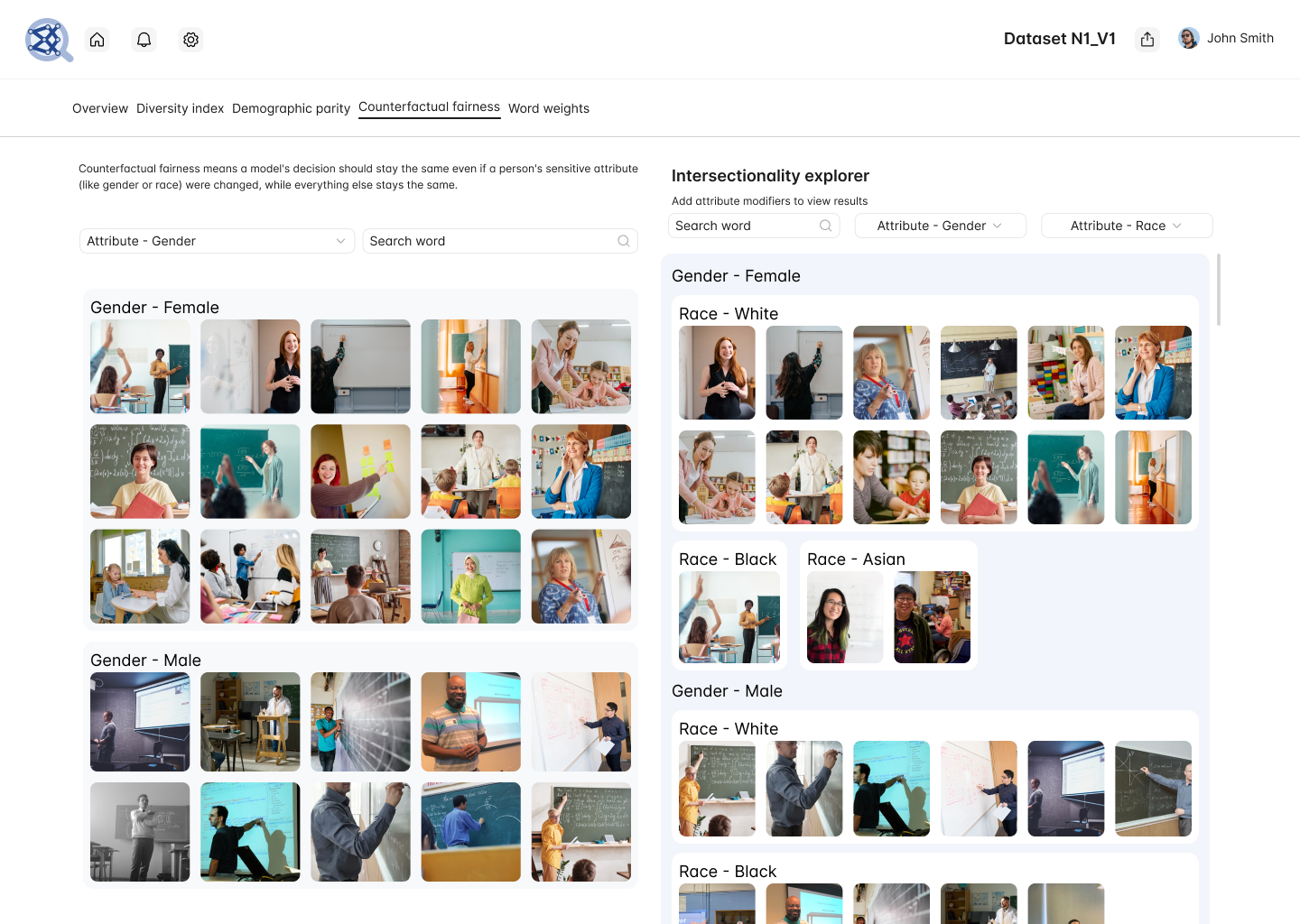

This graduation project, Unveiling Biases: A Visual Exploration of Bias in Generative AI Systems, investigates the presence and impact of bias in generative AI models used for natural language processing and image generation. These systems often mirror societal prejudices embedded in their training data, reinforcing stereotypes and systemic inequalities. The project aims to identify, quantify, and visualize such biases to promote awareness and support fairness in AI development. Its key objectives are to examine racial, gender, and cultural biases, develop intuitive visual representations of them, and propose a scalable framework for bias detection and visualization. The methodology was structured in two phases. The first, bias detection, used dataset analysis, prompt-based evaluations, statistical metrics, and tools such as AI Fairness 360. The second, visualization, involved interactive heatmaps, comparison graphs, and dashboards designed using D3.js and Figma. The project culminates in visual artifacts, analytical documentation, and actionable recommendations that contribute to the discourse on ethical and inclusive AI.